- A new superstabilizer scheme enables color codes to tolerate manufacturing defects on superconducting quantum processors without disabling neighboring qubits, achieving lower logical error rates than conventional approaches

- Color codes natively support a full set of transversal Clifford gates, an advantage surface codes lack, and this defect-adaptive framework removes one of the last practical barriers to their deployment

- Security architects should treat this as a timeline accelerant: color codes could become a preferred architecture for fault-tolerant quantum computing within 3-5 years, compressing your PQC migration window

Why Hardware Defects in Quantum Processors Matter to Your Security Roadmap

Every superconducting quantum processor ships with broken qubits. Manufacturing yields are imperfect, and some fraction of data qubits and ancilla qubits are dead on arrival or degrade during operation. Until now, color codes, one of the leading quantum error correction schemes (the foundational layer that makes fault-tolerant quantum computing possible), handled these defects crudely: disable the broken qubit and its neighbors, burning through scarce quantum resources.

This matters to CISOs because large-scale fault-tolerant quantum computing is the threshold at which RSA-2048 and 256-bit elliptic-curve cryptography (ECC) become breakable with Shor’s algorithm. Every improvement in error correction efficiency pulls that threshold closer. A new paper posted to arXiv (arXiv:2604.05874v1) proposes a systematic framework that makes one of the two leading quantum error correction architectures, color codes, significantly more practical on real hardware. That narrows the gap between theoretical quantum advantage and deployed quantum threats.

What Color Codes Are and Why They Were Falling Behind

Definition: Color codes are a family of topological quantum error-correcting codes defined on trivalent lattices whose faces can be three-colored. They encode logical qubits into many physical qubits and detect errors through stabilizer measurements on overlapping groups of qubits. Their defining advantage is native support for a transversal Clifford gate set: logical H, S, and CNOT can each be executed in a single parallel layer of physical gates (single-qubit gates on every qubit for H and S, and pairwise two-qubit gates between matching qubits of two code blocks for CNOT), without complex multi-step procedures.
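To make the definition concrete, the sketch below checks the defining properties of the smallest 2D color code, the 7-qubit Steane code (three weight-4 faces on a triangle). It is illustrative only: the qubit labels and face supports are one standard choice, and the helper names are hypothetical. Because each face defines both an X-type and a Z-type check on the same qubits, the code is self-dual by construction, which is what lets a layer of physical H gates act as logical H.

```python
from itertools import combinations

# One standard labeling of the [[7,1,3]] Steane code as a 2D color code.
# Each face defines BOTH an X-type and a Z-type stabilizer on the same qubits.
FACES = [
    frozenset({3, 4, 5, 6}),
    frozenset({1, 2, 5, 6}),
    frozenset({0, 2, 4, 6}),
]
# Logical X and logical Z are both supported on all seven qubits (transversal).
LOGICAL = frozenset(range(7))


def xz_commute(x_support, z_support):
    """An X-type and a Z-type Pauli commute iff their supports overlap evenly."""
    return len(x_support & z_support) % 2 == 0


def is_valid_code(faces, logical):
    """Check the commutation conditions these stabilizers and logicals must meet."""
    # Every X-type face commutes with every Z-type face (including itself).
    checks_commute = all(xz_commute(a, b) for a in faces for b in faces)
    # Logical X and logical Z commute with every stabilizer...
    logicals_commute = all(xz_commute(logical, f) for f in faces)
    # ...and anticommute with each other (odd overlap), as a qubit's X and Z must.
    logicals_anticommute = not xz_commute(logical, logical)
    return checks_commute and logicals_commute and logicals_anticommute


def adjacent_faces_share_edge(faces):
    """In a 2D color code, neighboring faces share one edge, i.e. two qubits."""
    return all(len(a & b) == 2 for a, b in combinations(faces, 2))


print(is_valid_code(FACES, LOGICAL))        # True
print(adjacent_faces_share_edge(FACES))     # True
```

Transversal CNOT works for any CSS code, surface codes included; what sets color codes apart is that H and S are transversal as well.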

Surface codes, the other dominant topological code family, have received far more engineering attention. Prior research produced systematic defect-adaptive methods for surface codes, allowing processors to route around broken qubits with minimal overhead. Color codes had no equivalent framework. When a qubit failed in a color code lattice, the standard response was to disable neighboring data qubits — a resource-intensive workaround that increased logical error rates and made color codes appear less practical despite their gate advantages.

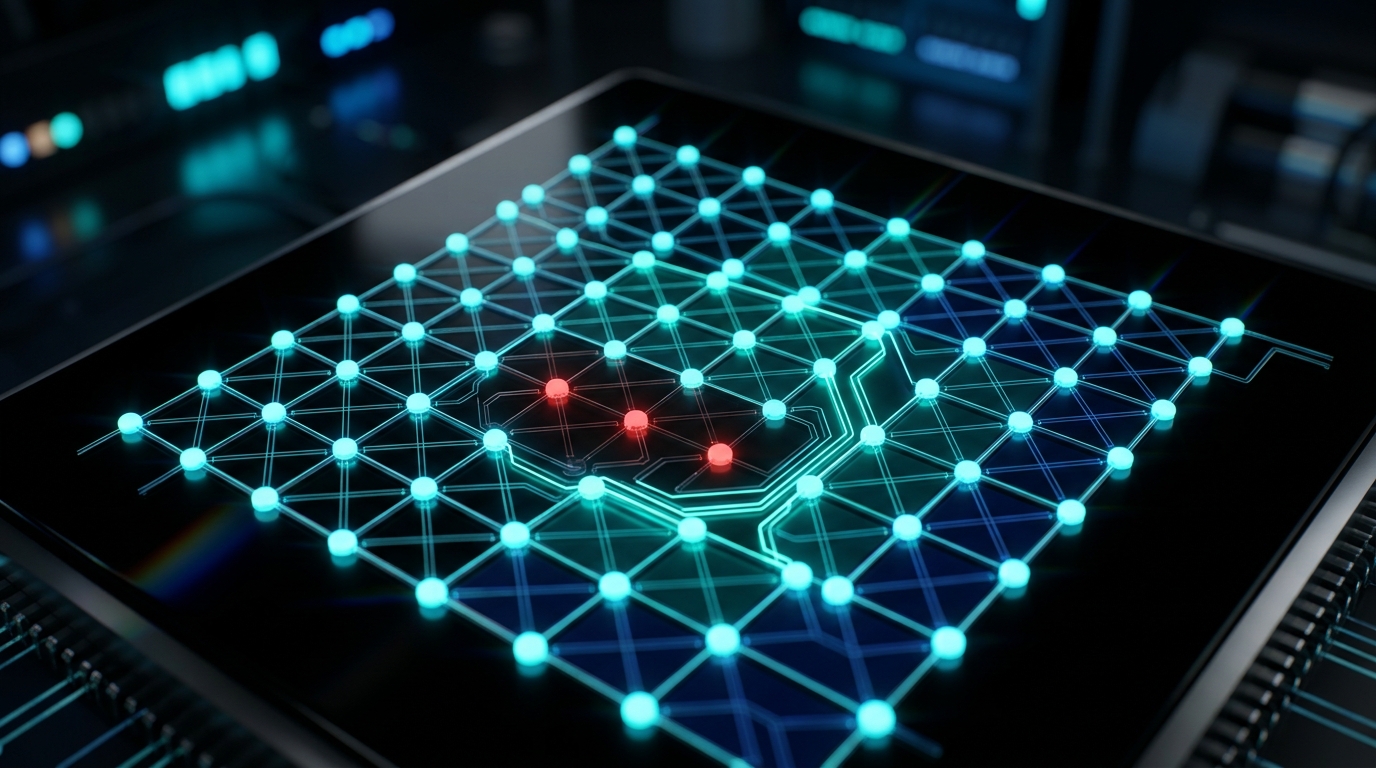


| Property | Surface Codes | Color Codes (Pre-Paper) | Color Codes (With Superstabilizer) |
|---|---|---|---|
| Transversal Clifford gates | Partial (CNOT only; H and S need lattice surgery or code deformation) | Yes (native) | Yes (native) |
| Defect-adaptive framework | Yes (systematic) | No (ad hoc) | Yes (systematic) |
| Handling of broken data qubits | Reroute with moderate overhead | Disable neighbors (high waste) | Superstabilizer repair (low waste) |
| Handling of broken ancilla qubits | Established methods | Disable neighbors (high waste) | Two optimized schemes (low waste) |
| Lattice surgery support | Yes | Limited | Yes |
| Logical error rate under defects | Baseline | Higher than surface codes | Lower than conventional color code methods |

Technical Deep-Dive: The Superstabilizer Scheme

The core contribution is a universal superstabilizer scheme that repairs arbitrary stabilizer code lattices when data qubits fail. Rather than treating each defect as an isolated patch job, the framework provides a systematic method applicable to any stabilizer code — then specializes it for color codes on square lattices.

How It Handles Data Qubit Defects

When an internal data qubit fails, conventional approaches disable that qubit and its neighbors, collapsing the local code structure. The superstabilizer scheme instead removes only the defective qubit and merges the stabilizers surrounding it into a single “superstabilizer”, a larger stabilizer that spans the gap. This keeps the code’s error-correcting capability largely intact across the defect site without sacrificing adjacent healthy qubits.
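The merging step rests on one fact: the product of same-type Pauli checks is supported on the qubits that appear an odd number of times, i.e. the symmetric difference of their supports. The sketch below applies that rule to a hypothetical surface-code-style patch because its geometry is the simplest to show; it is not the paper’s color-code construction. In a color code each qubit sits on three faces of each type, so which checks get merged has to be chosen differently.

```python
# Toy 3x3 grid of data qubits with coordinates (x, y). A plaquette is named by its
# lower-left corner; bulk X- and Z-type plaquettes alternate in a checkerboard.

def plaquette(i, j):
    """Support of the weight-4 plaquette whose lower-left corner is (i, j)."""
    return frozenset({(i, j), (i + 1, j), (i, j + 1), (i + 1, j + 1)})


X_CHECKS = [plaquette(0, 0), plaquette(1, 1)]
Z_CHECKS = [plaquette(1, 0), plaquette(0, 1)]


def product_support(checks):
    """Support of a product of same-type Pauli checks: qubits hit an odd number of times."""
    support = frozenset()
    for check in checks:
        support = support ^ check  # symmetric difference
    return support


def superstabilizer(checks, dead_qubit):
    """Merge every check touching the dead qubit into one larger check without it."""
    touching = [c for c in checks if dead_qubit in c]
    merged = product_support(touching)
    if dead_qubit in merged:
        # An odd number of checks touch the qubit, so it does not cancel out.
        raise ValueError("dead qubit survives the merge; choose checks differently")
    return merged


dead = (1, 1)  # hypothetical failure of the central data qubit
x_super = superstabilizer(X_CHECKS, dead)
z_super = superstabilizer(Z_CHECKS, dead)
print(sorted(x_super))  # six qubits, (1, 1) excluded
```

The X and Z superstabilizers here overlap on four qubits, so they commute, as stabilizers must.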

Unlike conventional approaches that directly disable neighboring data qubits and thus cause resource waste, both of our schemes avoid such waste and consequently achieve a lower logical error rate. — From the paper abstract, arXiv:2604.05874

Two Optimization Paths for Ancilla Qubit Defects

Ancilla qubits perform the stabilizer measurements that detect errors. When an ancilla qubit fails, the system loses visibility into errors in that region. The paper proposes two distinct repair strategies:

- Ancilla reuse scheme: Neighboring ancilla qubits absorb the measurement responsibilities of the failed ancilla, performing additional stabilizer checks during their measurement cycles

- iSWAP gate scheme: Employs iSWAP gates — a native two-qubit gate on many superconducting platforms — to reroute measurement circuits around the defective ancilla
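The ancilla reuse idea can be pictured as a scheduling problem: every check whose ancilla has failed must be handed to a healthy neighbor, which then needs extra measurement slots. The sketch below is a hypothetical greedy assignment (least-loaded neighbor first), not the paper’s algorithm; the names `reassign_checks` and `slots_per_cycle` are illustrative, and it assumes each helper ancilla can physically couple to every data qubit of the check it inherits, which a real layout must verify.

```python
def reassign_checks(primary, neighbors, failed):
    """Return a schedule mapping each healthy ancilla to the checks it measures.

    primary:   dict check name -> ancilla that normally measures it
    neighbors: dict ancilla -> list of adjacent ancillas
    failed:    set of defective ancillas
    """
    schedule = {}
    # Healthy ancillas keep their own checks.
    for check, ancilla in sorted(primary.items()):
        if ancilla not in failed:
            schedule.setdefault(ancilla, []).append(check)
    # Orphaned checks go to the least-loaded healthy neighbor (ties broken by name).
    for check, ancilla in sorted(primary.items()):
        if ancilla in failed:
            candidates = [n for n in neighbors.get(ancilla, []) if n not in failed]
            if not candidates:
                raise ValueError(f"no healthy neighbor can measure {check}")
            helper = min(candidates, key=lambda n: (len(schedule.get(n, [])), n))
            schedule.setdefault(helper, []).append(check)
    return schedule


def slots_per_cycle(schedule):
    """Measurement slots the busiest ancilla needs in each error-correction cycle."""
    return max(len(checks) for checks in schedule.values())


primary = {"S0": "a0", "S1": "a1", "S2": "a2"}
neighbors = {"a1": ["a0", "a2"]}
schedule = reassign_checks(primary, neighbors, failed={"a1"})
print(schedule)                   # {'a0': ['S0', 'S1'], 'a2': ['S2']}
print(slots_per_cycle(schedule))  # 2
```

The extra slot is the cost of reuse: the error-correction cycle gets longer, but no data qubit is switched off.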

Both schemes achieve the same critical property: no neighboring data qubits are disabled. This directly translates to lower resource overhead and better logical error rates compared to the disable-and-patch approach.
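A two-qubit state-vector calculation shows why iSWAP helps with rerouting. iSWAP exchanges |01⟩ and |10⟩ while multiplying them by i, so it moves a qubit’s state onto its neighbor up to a known phase (an S gate) that a single S† undoes. The sketch below, in plain Python, shows only that building block; how the paper composes iSWAPs into measurement circuits around a dead ancilla is not reproduced here, and the helper names are hypothetical.

```python
# Basis order for two qubits (q0, q1): |00>, |01>, |10>, |11>.
ISWAP = [
    [1, 0, 0, 0],
    [0, 0, 1j, 0],
    [0, 1j, 0, 0],
    [0, 0, 0, 1],
]


def apply(matrix, state):
    """Multiply a 4x4 matrix by a 4-entry state vector."""
    return [sum(matrix[r][c] * state[c] for c in range(4)) for r in range(4)]


def product_state(q0, q1):
    """Tensor product of two single-qubit states given as (amp_0, amp_1)."""
    return [q0[0] * q1[0], q0[0] * q1[1], q0[1] * q1[0], q0[1] * q1[1]]


def move_with_iswap(psi):
    """Start with psi on q0 and |0> on q1, apply iSWAP, return q1's amplitudes."""
    out = apply(ISWAP, product_state(psi, (1, 0)))
    # q0 ends in |0>, so only |00> and |01> carry amplitude.
    if abs(out[2]) > 1e-12 or abs(out[3]) > 1e-12:
        raise RuntimeError("unexpected amplitude on q0 = |1>")
    return out[0], out[1]


def s_dagger(q):
    """Undo the extra phase: S-dagger multiplies the |1> amplitude by -i."""
    return q[0], -1j * q[1]


psi = (0.6, 0.8j)
moved = move_with_iswap(psi)   # equals S|psi> = (0.6, i * 0.8j) = (0.6, -0.8)
restored = s_dagger(moved)     # back to (0.6, 0.8j)
```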

Defect Clusters and Architectural Completeness

Real processors rarely fail one isolated qubit at a time; defects often come in clusters. The paper constructs a comprehensive defect-adaptive architecture that handles arbitrary defect configurations across the lattice, not just isolated single-qubit failures. Combined with verified support for lattice surgery (the standard method for performing non-transversal logical operations between code blocks), this gives color codes a complete fault-tolerant operational toolkit.
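Handling clusters starts with deciding which defects belong together. A minimal sketch, assuming defects are given as grid coordinates and that two defects within one lattice step of each other (diagonals included) should be repaired as a single region; both the adjacency rule and the function name `defect_clusters` are hypothetical choices, not taken from the paper.

```python
from collections import deque


def defect_clusters(defects):
    """Group defective qubit coordinates into clusters of mutually adjacent defects."""
    remaining = set(defects)
    clusters = []
    while remaining:
        seed = min(remaining)           # deterministic starting point
        remaining.remove(seed)
        cluster = {seed}
        queue = deque([seed])
        while queue:                    # breadth-first search over adjacent defects
            x, y = queue.popleft()
            for dx in (-1, 0, 1):
                for dy in (-1, 0, 1):
                    neighbor = (x + dx, y + dy)
                    if neighbor in remaining:
                        remaining.remove(neighbor)
                        cluster.add(neighbor)
                        queue.append(neighbor)
        clusters.append(sorted(cluster))
    return sorted(clusters)


print(defect_clusters({(0, 0), (1, 1), (5, 5), (5, 6), (9, 0)}))
# [[(0, 0), (1, 1)], [(5, 5), (5, 6)], [(9, 0)]]
```

Each cluster would then be repaired as one merged defect region rather than as several overlapping single-qubit patches.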

The combination of transversal Clifford gates and lattice surgery support, together with the magic-state techniques for non-Clifford gates (such as T) that every 2D topological code needs, gives color codes with the superstabilizer scheme a path to universal quantum computation on defective hardware: the same operational completeness that made surface codes the default choice for quantum computing roadmaps.

What This Means for the Cryptographic Threat Timeline

The path to cryptographically relevant quantum computers runs through fault-tolerant quantum error correction. Today, surface codes dominate many hardware roadmaps, including Google’s, because they had the most mature engineering toolchain, including defect handling. This paper removes one of color codes’ key practical disadvantages.

Near-Term Impact (1-2 Years)

Quantum hardware teams gain a systematic framework for deploying color codes on real superconducting processors. This could improve effective yield, the percentage of fabricated qubits that contribute to useful computation, by avoiding the cascade of disabled qubits that conventional defect handling caused. Expect experiments testing defect-adaptive color codes on near-term processors within this window.
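The yield argument is simple arithmetic. The sketch below compares the two policies with made-up numbers (1,000 qubits, 20 defects, and an assumed four healthy neighbors disabled per defect under the conventional policy); none of these figures come from the paper, and the calculation ignores the fact that real neighborhoods can overlap.

```python
def effective_yield(n_qubits, n_defects, lost_per_defect):
    """Fraction of fabricated qubits still usable, assuming non-overlapping losses."""
    usable = max(n_qubits - n_defects * lost_per_defect, 0)
    return usable / n_qubits


N_QUBITS, N_DEFECTS = 1000, 20   # hypothetical processor
NEIGHBORS_DISABLED = 4           # assumed, for the disable-neighbors policy

conventional = effective_yield(N_QUBITS, N_DEFECTS, 1 + NEIGHBORS_DISABLED)
superstab = effective_yield(N_QUBITS, N_DEFECTS, 1)
print(f"disable neighbors: {conventional:.0%}, superstabilizer: {superstab:.0%}")
# disable neighbors: 90%, superstabilizer: 98%
```

Usable qubits are only a proxy: a superstabilizer still weakens error detection locally, so the figure of merit the paper reports is the logical error rate.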

Medium-Term Impact (3-5 Years)

As quantum processors scale to thousands of physical qubits, defect rates compound. A framework that handles defects without resource waste becomes a scaling enabler. Color codes’ native transversal Clifford gates avoid the extra time and space that surface codes spend on logical H and S, which typically require lattice surgery or code deformation. (Both code families still need magic state distillation or a comparable technique for non-Clifford gates such as T.) The overhead savings at scale could make color codes the preferred architecture for fault-tolerant systems.
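“Defect rates compound” has a precise meaning: if each qubit is independently defective with probability p, the chance that an n-qubit device has no defects at all is (1 − p)^n, which collapses quickly as n grows. The 1% defect rate below is an assumption for illustration, and real defects are not independent.

```python
def prob_defect_free(n_qubits, p_defect):
    """Probability that none of n independently fabricated qubits is defective."""
    return (1 - p_defect) ** n_qubits


def expected_defects(n_qubits, p_defect):
    """Mean number of defective qubits on the device."""
    return n_qubits * p_defect


P_DEFECT = 0.01  # assumed per-qubit defect probability
for n in (100, 1_000, 10_000):
    print(n, f"{prob_defect_free(n, P_DEFECT):.1e}", expected_defects(n, P_DEFECT))
```

At a fixed defect rate, a large device is all but guaranteed to contain defects, so defect handling has to be routine rather than exceptional.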

Long-Term Impact (5+ Years)

If color codes become the dominant error correction architecture, fault-tolerant quantum computing timelines could compress. The transversal gate advantage reduces the total physical qubit count needed for a given logical computation. For cryptographic attacks specifically, this means the qubit threshold for breaking RSA-2048 or solving discrete logarithm problems relevant to ECC could be reached with fewer physical resources than surface-code-based estimates suggest.
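A separate, well-documented source of savings is encoding density. At the same code distance d, the triangular color code on the hexagonal (6.6.6) lattice uses (3d² + 1)/4 data qubits, against d² for the rotated surface code. The sketch below compares only data qubits. It ignores ancillas, magic-state factories, the square-lattice layouts the paper targets, and the generally lower error thresholds of color codes, which can push the required distance up. It shows the direction of the claim, not a resource estimate.

```python
def surface_code_data_qubits(d):
    """Rotated surface code: a d x d grid of data qubits."""
    return d * d


def color_code_data_qubits(d):
    """Triangular 6.6.6 color code of odd distance d."""
    if d % 2 == 0:
        raise ValueError("triangular color codes use odd distance")
    return (3 * d * d + 1) // 4


for d in (3, 5, 25):
    print(d, surface_code_data_qubits(d), color_code_data_qubits(d))
# 3 9 7
# 5 25 19
# 25 625 469
```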

The BeQuantum Perspective

At BeQuantum, we track quantum error correction advances as direct inputs to our threat modeling. Our PQC Layer implements NIST-standardized algorithms (ML-KEM, ML-DSA, SLH-DSA) precisely because the timeline to fault-tolerant quantum computing remains uncertain — and papers like this one are the mechanism by which that uncertainty resolves toward “sooner.”

The superstabilizer scheme is theoretical — no experimental validation on hardware exists yet, no specific qubit counts were benchmarked, and no defect density thresholds were reported. These are real gaps. But the systematic nature of the framework means it’s engineerable: hardware teams can implement it without waiting for further theoretical breakthroughs.

Our Digital Notary architecture assumes a crypto-agile posture — document signatures that can be re-anchored to stronger algorithms as standards evolve. The IceCase hardware wallet implements hybrid classical-PQC key management for exactly this class of scenario: when a theoretical advance signals that migration timelines may need to accelerate, organizations using crypto-agile infrastructure can respond in weeks rather than years.

What You Should Do Next

- Within 30 days: Inventory your organization’s cryptographic dependencies. Identify every system using RSA, ECC, or Diffie-Hellman key exchange. If you haven’t started this audit, this paper is another data point confirming you’re behind schedule.

- Within 90 days: Evaluate your PQC migration readiness against NIST’s published timelines. NIST’s transition guidance (NIST IR 8547) proposes deprecating quantum-vulnerable algorithms at the 112-bit security level after 2030 and disallowing RSA- and ECC-based schemes entirely after 2035. Map your TLS certificate chains, VPN tunnels, and code-signing infrastructure against PQC-ready alternatives.

- Within 180 days: Deploy hybrid key exchange (classical + PQC) on your highest-value data flows. Harvest-now-decrypt-later attacks mean data encrypted today with vulnerable algorithms is already at risk from future quantum computers, and “future” keeps getting closer.
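The 30-day inventory step can start as simply as tagging each system with the key-exchange or signature algorithm it uses. The sketch below does that with a small, hypothetical and deliberately non-exhaustive lookup table of common identifiers (OpenSSH key types, TLS group names, NIST PQC parameter-set names); a real audit would pull these from certificate stores, configuration files, and network scans. Every RSA, elliptic-curve, and finite-field Diffie-Hellman entry counts as quantum-vulnerable, Ed25519 and X25519 included.

```python
# Hypothetical, non-exhaustive lookup tables; extend them for your environment.
QUANTUM_VULNERABLE = {
    "ssh-rsa", "rsa-sha2-256", "ecdsa-sha2-nistp256", "ssh-ed25519",  # SSH key types
    "secp256r1", "secp384r1", "x25519", "ffdhe2048",                   # TLS groups
}
PQC_OR_HYBRID = {"x25519mlkem768", "ml-kem-768", "ml-dsa-65", "slh-dsa-sha2-128s"}


def classify(algorithm):
    """Map an algorithm identifier to a coarse migration status."""
    name = algorithm.strip().lower()
    if name in PQC_OR_HYBRID:
        return "pqc-or-hybrid"
    if name in QUANTUM_VULNERABLE:
        return "quantum-vulnerable"
    return "unknown"  # needs manual review


def inventory(records):
    """Group (system, algorithm) pairs by migration status."""
    report = {}
    for system, algorithm in records:
        report.setdefault(classify(algorithm), []).append(system)
    return {status: sorted(systems) for status, systems in report.items()}


records = [
    ("vpn-gateway", "x25519"),
    ("public-web", "X25519MLKEM768"),
    ("git-ssh", "ssh-ed25519"),
    ("legacy-app", "3des"),
]
print(inventory(records))
```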

Frequently Asked Questions

Q: Does this paper mean quantum computers can break encryption sooner than expected?

A: Not directly. This paper improves the engineering feasibility of one quantum error correction architecture (color codes), which is a prerequisite technology for fault-tolerant quantum computing. No one is breaking RSA with this result tomorrow. However, it narrows the practical gap between theoretical quantum algorithms and deployable quantum hardware, which means cryptographic threat timelines should be reassessed.

Q: What’s the difference between color codes and surface codes for security planning purposes?

A: Both are topological quantum error-correcting codes that enable fault-tolerant quantum computing. Surface codes are more mature in engineering practice but cannot perform the full Clifford gate set transversally; logical H and S require lattice surgery or code deformation, which costs extra time and qubits. Color codes perform Clifford gates natively (transversally) but previously lacked robust defect handling. This paper closes that gap, potentially making color codes more resource-efficient at scale, which could reduce the total physical qubit count needed for cryptographically relevant computations.

Q: Should this change my PQC migration timeline?

A: Not in isolation, but it should reinforce urgency. Each advance in quantum error correction removes an engineering obstacle between today’s noisy quantum processors and tomorrow’s fault-tolerant machines. If your migration plan assumed surface-code timelines as the baseline, color code improvements represent a parallel path that could arrive faster. The prudent response is to treat your current migration deadline as a ceiling, not a target.
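One common way to put numbers on “treat your deadline as a ceiling” is Mosca’s inequality: if x (how long data must stay confidential) plus y (how long migration takes) exceeds z (years until a cryptographically relevant quantum computer), data harvested today will be exposed. The inputs below are hypothetical planning figures; z in particular is exactly the estimate that advances like this paper can shorten.

```python
def exposure_years(shelf_life, migration_time, years_to_crqc):
    """Mosca's inequality as a margin: x + y - z; positive means data is at risk."""
    return shelf_life + migration_time - years_to_crqc


def at_risk(shelf_life, migration_time, years_to_crqc):
    """True when x + y > z."""
    return exposure_years(shelf_life, migration_time, years_to_crqc) > 0


# Hypothetical inputs, in years: 10-year confidentiality, 5-year migration.
print(at_risk(10, 5, 12), exposure_years(10, 5, 12))  # True 3
print(exposure_years(10, 5, 9))                       # 6
```

With x and y fixed, every year shaved off z adds a year to the exposure window.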

Last updated: April 2026. Based on analysis of arXiv:2604.05874v1.